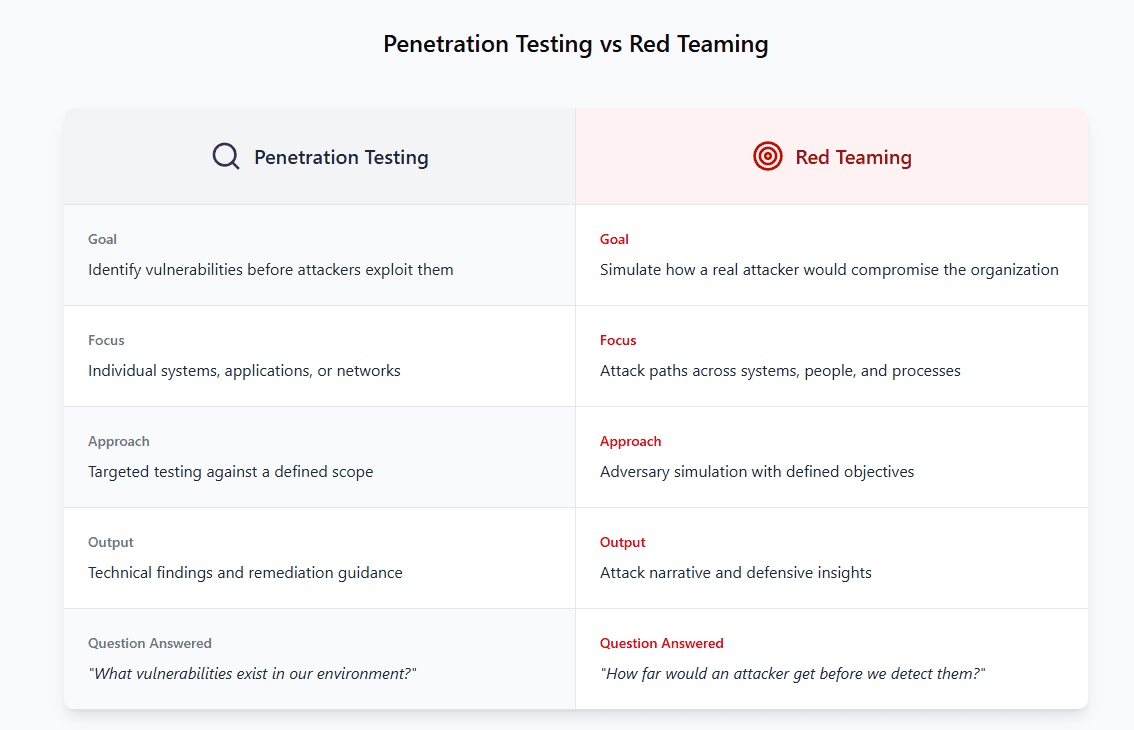

Understanding how adversary simulation differs from traditional penetration testing.

Most organizations assume they would notice if an attacker made it into their environment.

The reasoning seems sound: logging is enabled, alerts are configured, and a SOC is watching dashboards. On paper, the controls are there.

But controls that look good in architecture diagrams don’t always behave the way people expect during an attack.

That’s the gap red teaming is meant to expose.

Red team engagements aren’t about generating long lists of vulnerabilities. The goal is to simulate how a real attacker would approach your organization and observe how your defenses actually respond.

The uncomfortable question red teaming answers is simple:

If someone deliberately targeted your organization, how far would they get before anyone noticed?

What Attack Paths Actually Look Like

Real attackers rarely compromise an organization with a single vulnerability.

In most engagements, the path starts with something small: a misconfiguration, an exposed service, a weak credential, or sometimes even an issue that already appeared in a previous pentest report.

Individually, those issues don’t always look critical.

Combined, they can create a path.

During red team engagements we often see attackers chaining together several small weaknesses, moving step by step through the environment until they reach something valuable.

That might involve things like:

Accessing sensitive data

Compromising internal systems

Escalating privileges to administrative control

Moving laterally across the network

Avoiding detection by monitoring tools
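The chaining idea above can be sketched as a graph search. In this minimal illustration, each position in a hypothetical environment is a node and each weakness that lets an attacker move from one position to the next is an edge. A breadth-first search then finds the shortest chain from an external foothold to a valuable target. Every host name, weakness, and severity label here is invented for illustration; the point is only that a chain of individually "low" or "medium" issues can still end at administrative control.

```python
from collections import deque

# Hypothetical environment: each edge is a weakness that lets an attacker move
# from one position to another. Names and severity labels are illustrative only.
EDGES = {
    "internet": [("vpn-portal", "weak credential", "medium")],
    "vpn-portal": [("workstation", "exposed internal service", "low")],
    "workstation": [
        ("file-server", "cached admin password", "low"),
        ("print-server", "misconfigured share", "low"),
    ],
    "file-server": [("domain-admin", "over-privileged service account", "medium")],
    "print-server": [],
    "domain-admin": [],
}


def find_attack_path(start, goal):
    """Breadth-first search for the shortest chain of weaknesses from start to goal.

    Returns a list of (source, destination, weakness, severity) steps,
    or None if the goal cannot be reached from start.
    """
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for nxt, weakness, severity in EDGES.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [(node, nxt, weakness, severity)]))
    return None


if __name__ == "__main__":
    chain = find_attack_path("internet", "domain-admin")
    for src, dst, weakness, severity in chain:
        print(f"{src} -> {dst}: {weakness} ({severity})")
```

No single step in the resulting chain is rated high or critical, which is exactly why a findings list sorted by severity can miss the path as a whole.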

At that point, the question isn’t just “Does this vulnerability exist?”

The real question becomes:

Would your defenses actually stop someone from progressing?

Detection and Response Are the Real Test

In a traditional penetration test, the success metric is straightforward. The testers find vulnerabilities, document them, and help the organization understand how to fix them.

A red team engagement measures something different.

It measures how the security program performs when someone is actively trying to bypass it.

During an engagement, we often look at questions like:

Did anyone detect the activity?

Did monitoring systems generate alerts?

Did those alerts reach the right team?

Did incident response procedures activate?

How long did it take before someone investigated?
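One way to turn the questions above into measurements is to record a timeline during the engagement and compute how long each defensive milestone took relative to initial access. The sketch below assumes a hypothetical timeline format (event name plus ISO-8601 timestamp) and hypothetical milestone names; it is not a standard schema. A milestone that never occurred is reported as None, which is often the most important finding.

```python
from datetime import datetime

# Hypothetical engagement timeline; event names and timestamps are illustrative.
TIMELINE = [
    ("initial_access", "2026-03-02T09:15"),
    ("lateral_movement", "2026-03-02T13:40"),
    ("first_alert", "2026-03-03T08:05"),
    ("alert_routed_to_soc", "2026-03-03T10:30"),
    ("investigation_started", "2026-03-04T11:00"),
]

# Hypothetical defensive milestones, one per question in the list above.
MILESTONES = (
    "first_alert",
    "alert_routed_to_soc",
    "incident_response_activated",
    "investigation_started",
)


def detection_metrics(timeline):
    """Return hours from initial access to each milestone, or None if it never happened."""
    events = {name: datetime.fromisoformat(ts) for name, ts in timeline}
    start = events["initial_access"]
    metrics = {}
    for milestone in MILESTONES:
        ts = events.get(milestone)
        metrics[milestone] = None if ts is None else (ts - start).total_seconds() / 3600
    return metrics


if __name__ == "__main__":
    for milestone, hours in detection_metrics(TIMELINE).items():
        status = "never occurred" if hours is None else f"{hours:.1f} h after initial access"
        print(f"{milestone}: {status}")
```

In this invented timeline, lateral movement happens within hours, the first alert fires the next morning, and incident response procedures never formally activate, which is the kind of gap a vulnerability list alone would not show.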

Sometimes the most valuable insight from a red team engagement isn’t the vulnerability itself. It’s discovering that an attacker could move through several systems before anyone noticed.

In one engagement, initial access came from a low-risk issue that had already appeared in a previous pentest report. It hadn’t been considered critical because it required multiple steps to exploit. Once we had that foothold, however, it allowed us to move laterally through several internal systems before detection occurred.

Why Some “Red Team” Services Are Really Just Pentests

The term red team gets used pretty loosely in the security industry.

Plenty of services are marketed as red team engagements, but once you look at the details, they behave exactly like a penetration test.

For example, you’ll often see “red team” offerings that:

Follow a narrow technical scope

Focus only on vulnerability discovery

Deliver a standard pentest report with findings and CVSS scores

Never test detection or incident response

There’s nothing wrong with penetration testing. It’s an essential part of a security program.

But simply renaming a pentest doesn’t turn it into adversary simulation.

A true red team engagement is built around realistic attacker behavior and defined objectives. The goal isn’t just to find weaknesses; it’s to see whether an attacker could move through the environment without being stopped.

When Red Teaming Actually Makes Sense

Red teaming tends to provide the most value for organizations that already have a reasonably mature security program.

Typically, that means things like:

Vulnerability management processes are already in place

Security monitoring and alerting tools are deployed

There is some form of SOC or detection capability

Incident response procedures exist

Without those foundations, a red team exercise usually just confirms what a penetration test would already reveal: that attackers can exploit obvious weaknesses.

For many organizations, the practical approach is to start with penetration testing and vulnerability management. Red teaming becomes valuable later, once the goal shifts toward validating detection and response.

How to Tell If Your Organization Is Ready for a Red Team Engagement

Not every organization benefits from a red team exercise right away. In practice, red teaming tends to deliver the most value when the fundamentals of a security program are already in place.

Organizations typically see the best results when they already have centralized logging, monitoring tools, and some form of incident detection capability. If a security team is still working through basic vulnerability management or configuration issues, a traditional penetration test will often produce more actionable results.

Red team engagements become valuable once the focus shifts from finding vulnerabilities to validating how well defenses detect and respond to real attacker behavior.

The Question Red Teaming Exists to Answer

At the end of the day, red teaming isn’t about checking a compliance box.

It’s about answering a question every security leader eventually asks:

If someone deliberately targeted our organization, how far would they actually get?

And sometimes the answer to that question reveals far more about a security program than a list of vulnerabilities ever could.

Red Sentry’s red team engagements focus on realistic adversary behavior, helping organizations understand how their defenses perform against real-world attack scenarios.

What Red Teaming Actually Looks Like (And Why It’s Not Just a Bigger Pentest)

Mar 16, 2026

Mike Shelton

Head of Pentesting